The Pinnacle of Social Robotics

Ameca represents a fundamental shift in humanoid robotics philosophy. While competitors like Tesla and Boston Dynamics focus on industrial tasks and physical labor, Engineered Arts has specialized in social interaction technology. Ameca isn't designed to lift boxes or fold laundry; it's engineered to communicate, engage, and connect with humans in ways that feel natural and authentic.

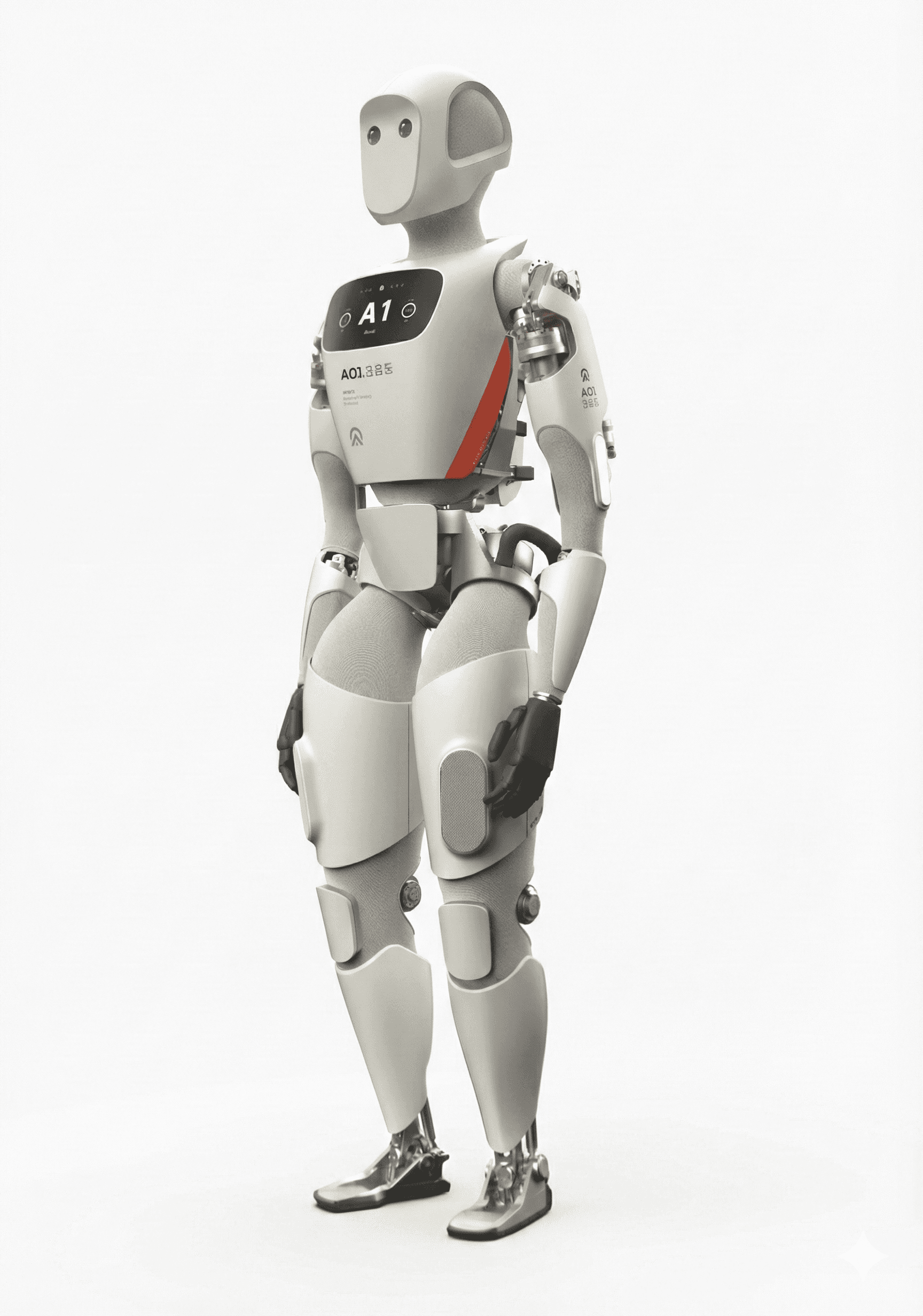

Design Philosophy: Mechanical Transparency

The most striking aspect of Ameca is its deliberate aesthetic choice: a highly expressive, humanlike face paired with an obviously robotic body. This "mechanical transparency" approach addresses one of robotics' greatest challenges, the uncanny valley. The grey, rubberized face and exposed chassis signal to human observers, "I am a machine," which paradoxically allows for deeper emotional engagement without the dissonance triggered by hyper-realistic androids.

This gender-neutral, race-neutral design also serves a practical purpose in research and commercial settings, allowing the robot to be perceived as a universal entity rather than representing any specific demographic.

The 27-DOF Facial Revolution

Ameca's cornerstone innovation is its face. While most social robots use a static mask with a moving jaw (1 DOF) or a simple screen, Ameca utilizes 27 independent motors beneath its silicone skin. These motors pull on the skin via tendon-like linkages, mimicking human facial musculature—the zygomaticus major for smiling, the corrugator supercilii for frowning.

This enables asymmetrical expressions (a skeptical raised eyebrow, a knowing smirk) and micro-expressions that flash briefly across the face, signaling "thought" or "processing" to observers. This capability is essential for masking latency in cloud-based AI: the robot can look pensive while waiting for a server response, maintaining the illusion of consciousness.
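The latency-masking idea can be sketched in a few lines of Python. This is only an illustration, not Tritium code: `fake_llm_call`, `respond_with_filler`, and the filler expression names are hypothetical, and a real system would drive motors where this sketch appends to a log.

```python
import asyncio

async def fake_llm_call(prompt: str, delay_s: float) -> str:
    """Hypothetical stand-in for a slow request to a remote language model."""
    await asyncio.sleep(delay_s)
    return f"reply to: {prompt}"

async def respond_with_filler(prompt, delay_s=0.3, tick_s=0.1):
    """Cycle 'thinking' expressions until the remote reply arrives."""
    fillers = ["glance_up", "brow_furrow", "head_tilt"]  # assumed expression names
    shown = []
    task = asyncio.create_task(fake_llm_call(prompt, delay_s))
    i = 0
    while not task.done():
        # A real robot would command facial motors here; we only record the choice.
        shown.append(fillers[i % len(fillers)])
        i += 1
        await asyncio.sleep(tick_s)
    return task.result(), shown

if __name__ == "__main__":
    reply, shown = asyncio.run(respond_with_filler("What is your name?"))
    print(shown, "->", reply)
```

The design point is that the expression loop and the network request run concurrently, so the face never freezes while the reply is in flight.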

Tritium 3: The Nervous System

The Tritium 3 operating system represents a paradigm shift in robot control. Built on a customized Linux kernel, it offers a browser-based interface accessible from any device—no heavy IDE required. Operators can log into the robot's IP address and see a real-time "digital twin" 3D visualization.

The system supports Python scripting, allowing researchers to integrate standard AI libraries (NumPy, TensorFlow) directly into workflows. Over-the-air updates enable continuous evolution, with new features, gestures, and security patches deployed remotely.

GPT-4 Integration: From Script to Improvisation

The integration of GPT-4 has transformed Ameca from a scripted animatronic into an improvisational actor. When a user speaks, Tritium captures audio, transcribes it using tools like OpenAI Whisper, sends the text to the LLM, and receives a response. Crucially, the system analyzes phonemes and calculates precise motor positions for lip-syncing—fully automated viseme generation.
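The last step, turning phonemes into mouth shapes, can be illustrated with a small lookup. This is a minimal sketch under stated assumptions: the phoneme symbols are ARPAbet-style, and the table, viseme names, and `visemes_from_phonemes` function are hypothetical rather than the mapping Tritium uses.

```python
# Hypothetical phoneme-to-viseme table (ARPAbet-style symbols); illustration only.
PHONEME_TO_VISEME = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "AA": "jaw_open", "AE": "jaw_open",
    "UW": "lips_rounded", "OW": "lips_rounded",
    "IY": "lips_spread", "EH": "lips_spread",
}

def visemes_from_phonemes(timed_phonemes):
    """Convert (phoneme, start_s, end_s) tuples into (time_s, viseme) keyframes.

    Consecutive phonemes that share a viseme are merged into one keyframe,
    and unknown phonemes fall back to a neutral mouth shape.
    """
    frames = []
    for phoneme, start_s, _end_s in timed_phonemes:
        viseme = PHONEME_TO_VISEME.get(phoneme, "neutral")
        if not frames or frames[-1][1] != viseme:
            frames.append((start_s, viseme))
    return frames

if __name__ == "__main__":
    timeline = [("M", 0.0, 0.1), ("AA", 0.1, 0.3), ("P", 0.3, 0.35), ("B", 0.35, 0.4)]
    for time_s, viseme in visemes_from_phonemes(timeline):
        print(f"{time_s:.2f}s {viseme}")
```

Each keyframe would then be translated into target positions for the mouth and jaw motors, a step this sketch leaves out.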

The "Roles" feature allows operators to upload domain-specific content (museum guides, corporate brochures), and the system uses Retrieval-Augmented Generation (RAG) to ground the robot's answers in that content, reducing the risk of hallucinations or inappropriate topics during events.
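To make the retrieval step concrete, here is a toy sketch of the general RAG pattern: pick the uploaded passage most similar to the question, then build a prompt that limits the model to that passage. It uses plain word-count cosine similarity instead of the learned embeddings a production system would likely use, and every name in it (`retrieve`, `build_prompt`, the sample passages) is an assumption for illustration.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(question, passages, k=1):
    """Return the k passages most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(passages, key=lambda p: cosine(q, Counter(tokenize(p))), reverse=True)
    return ranked[:k]

def build_prompt(question, passages, k=1):
    """Build a prompt that instructs the model to answer only from retrieved text."""
    context = "\n".join(retrieve(question, passages, k))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n"
        f"Context:\n{context}\nQuestion: {question}"
    )

if __name__ == "__main__":
    docs = ["The museum opens at 9 am on weekdays.", "The cafe serves coffee and sandwiches."]
    print(build_prompt("When does the museum open?", docs))
```

Constraining the prompt this way narrows what the model is likely to say, though it does not guarantee that the model stays on topic.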

Telepresence: The Human-in-the-Loop

One of Tritium's most powerful features is telepresence mode. A human operator anywhere in the world can "inhabit" the robot using a webcam and microphone. Facial expressions are tracked and mapped to Ameca's 27 facial motors in real time. When the operator smiles, Ameca smiles. This transforms the robot into the world's most advanced puppet, invaluable for complex social scenarios where AI might falter.
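The mapping from tracked expressions to motors might look something like the following sketch. It assumes the face tracker outputs named coefficients between 0 and 1 and that each motor accepts a normalized target between -1 and 1; the motor names, weights, and `retarget` function are hypothetical and cover only three of the 27 motors.

```python
def clamp(x, lo=-1.0, hi=1.0):
    """Limit x to the range [lo, hi]."""
    return max(lo, min(hi, x))

# Hypothetical mapping: motor name -> list of (tracked coefficient name, weight).
MOTOR_MAP = {
    "mouth_corner_left": [("smile_left", 1.0)],
    "mouth_corner_right": [("smile_right", 1.0)],
    "brow_inner": [("brow_up", 0.8), ("brow_down", -0.8)],
}

def retarget(coeffs, previous=None, smoothing=0.5):
    """Map tracked coefficients to motor targets with exponential smoothing.

    smoothing=0 follows the operator instantly; values near 1 move slowly,
    which suppresses tracker jitter at the cost of responsiveness.
    """
    previous = previous or {}
    targets = {}
    for motor, terms in MOTOR_MAP.items():
        raw = clamp(sum(weight * coeffs.get(name, 0.0) for name, weight in terms))
        last = previous.get(motor, 0.0)
        targets[motor] = smoothing * last + (1.0 - smoothing) * raw
    return targets

if __name__ == "__main__":
    frame = {"smile_left": 1.0, "smile_right": 0.9, "brow_up": 0.5}
    print(retarget(frame))
```

Calling `retarget` once per video frame and feeding its result back in as `previous` gives a smooth motion stream from a noisy tracker.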

Sensory Systems: Social Physics

Ameca's sensor suite is tuned for social dynamics—eye contact, personal space, and voice localization. Each eye contains an 8-megapixel camera aligned with the pupil center. When tracking a face, the robot physically rotates its eyes to center the target, creating genuine eye contact.
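The geometry behind centering a face can be sketched with a simple pinhole-camera model: the pixel offset of the face from the image center converts to the yaw and pitch the eye must rotate. The 60-degree horizontal field of view and the function name below are assumptions for illustration, not published Ameca specifications.

```python
import math

def gaze_correction(face_px, image_size, hfov_deg=60.0):
    """Return (yaw_deg, pitch_deg) that would center a face in the image.

    face_px: (x, y) pixel position of the detected face center.
    image_size: (width, height) of the camera image in pixels.
    hfov_deg: assumed horizontal field of view; square pixels are assumed.
    """
    width, height = image_size
    # Focal length in pixels, derived from the horizontal field of view.
    focal_px = (width / 2) / math.tan(math.radians(hfov_deg) / 2)
    dx = face_px[0] - width / 2
    dy = face_px[1] - height / 2
    yaw = math.degrees(math.atan2(dx, focal_px))
    pitch = -math.degrees(math.atan2(dy, focal_px))  # image y grows downward
    return yaw, pitch

if __name__ == "__main__":
    print(gaze_correction((2000, 900), (3264, 2448)))
```

A positive yaw here means the face is to the right of the image center, and a positive pitch means it is above.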

A chest-mounted Stereolabs ZED 2 camera provides depth perception and wide-angle vision for tracking multiple people. Binaural microphones in the ears determine directional sound sources through interaural time difference, triggering reflexive head-turning that significantly enhances realism.
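Interaural time difference localization rests on a short piece of trigonometry. For a distant source, the arrival-time gap between two microphones spaced a distance d apart is roughly d·sin(θ)/c, so the bearing follows as θ = arcsin(c·Δt/d). The sketch below applies this far-field approximation; the 0.18 m microphone spacing and the function name are assumed values, not Ameca specifications.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at room temperature

def azimuth_from_itd(itd_s, mic_spacing_m=0.18):
    """Estimate source bearing in degrees from the interaural time difference.

    itd_s: arrival time at one ear minus arrival time at the other, in seconds.
    Uses the far-field model itd = d * sin(theta) / c. The sign of the result
    follows the sign of itd_s; 0 means the source is straight ahead.
    """
    ratio = SPEED_OF_SOUND_M_S * itd_s / mic_spacing_m
    ratio = max(-1.0, min(1.0, ratio))  # guard against noise pushing |ratio| past 1
    return math.degrees(math.asin(ratio))

if __name__ == "__main__":
    for itd_us in (0, 100, 250, 500):
        print(f"{itd_us} us -> {azimuth_from_itd(itd_us * 1e-6):.1f} deg")
```

Time difference alone cannot distinguish a source in front from its mirror image behind, which is one reason a head turn followed by a second measurement is useful.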

History

February 2021: Project Ameca commenced at Engineered Arts headquarters in Cornwall, UK.

December 1, 2021: The first teaser video was released, showing Ameca "waking up" and examining its own hands in apparent disbelief. The video went viral, amassing millions of views on Twitter and TikTok.

January 2022: CES 2022 debut. Ameca became the breakout star of the show, featured by CNET, The Verge, and major television networks worldwide.

December 2022: Ameca delivered the "Alternative Christmas Message" on the UK's Channel 4, marking the first time a robot addressed the nation in this prestigious slot traditionally used for political commentary.

May 2023: At ICRA 2023 in London, Ameca demonstrated "Generative Drawing," using Stable Diffusion integration to create sketches requested by attendees.

February 2024: Mobile World Congress in Barcelona showcased full GPT-4 integration, demonstrating near-instant conversational abilities and multiple language support.

May 2025: At ICRA 2025 in Atlanta, Engineered Arts unveiled the Ameca Gen 3 with walking prototypes, moving the platform from stationary to mobile, and introduced "Ami," a desktop variant.

Current Applications

Ameca currently serves in high-visibility deployments worldwide, including the National Robotarium in Edinburgh, the Museum of the Future in Dubai, and the Computer History Museum in Mountain View. It functions as a public ambassador for artificial intelligence, a greeter at corporate events, and a rigorous testbed for human-robot interaction research.

The Walking Frontier

As of late 2025, commercial versions remain stationary, but Engineered Arts has successfully demonstrated bipedal locomotion prototypes. Unlike the hydraulic dynamism of Boston Dynamics, Ameca's walking approach focuses on energy-efficient, elegant locomotion. The walking version remains classified as a prototype due to safety considerations: a falling 62 kg robot presents significant liability.